The Six Pillars

Built by engineers for engineers. No fluff, just a solid memory layer.

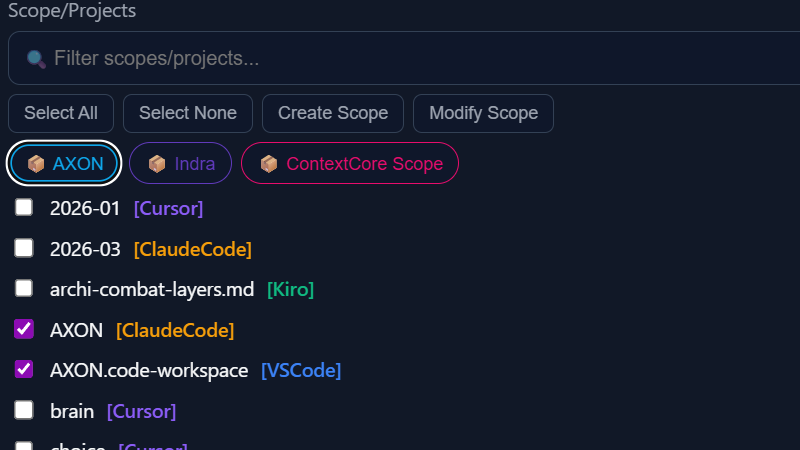

Multi-Harness Ingestion

Reads chat history from Claude Code, Cursor, OpenCode, Kiro, and VS Code Copilot. Normalizes everything into a single unified format, regardless of which tool produced it.
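
A minimal sketch of what per-harness normalization could look like. The `UnifiedMessage` fields, the adapter functions, and both input record shapes are invented for illustration; they are not the project's actual schema or the real export formats of any of these tools.

```python
from dataclasses import dataclass
from typing import Any, Callable


@dataclass(frozen=True)
class UnifiedMessage:
    """Hypothetical unified record; the real schema may differ."""
    source: str       # which harness produced the message
    session_id: str   # conversation identifier, always stored as a string
    role: str         # "user" or "assistant"
    text: str


def _from_flat(source: str, record: dict[str, Any]) -> UnifiedMessage:
    # Invented shape: {"session": ..., "role": ..., "content": ...}
    return UnifiedMessage(source, str(record["session"]), record["role"], record["content"])


def _from_nested(source: str, record: dict[str, Any]) -> UnifiedMessage:
    # Invented shape: {"conversationId": ..., "message": {"author": ..., "text": ...}}
    msg = record["message"]
    role = "assistant" if msg["author"] == "bot" else "user"
    return UnifiedMessage(source, str(record["conversationId"]), role, msg["text"])


# One adapter per harness; the mapping of harness to shape is an assumption.
ADAPTERS: dict[str, Callable[[str, dict[str, Any]], UnifiedMessage]] = {
    "claude-code": _from_flat,
    "cursor": _from_nested,
}


def normalize(source: str, record: dict[str, Any]) -> UnifiedMessage:
    """Convert one harness-specific record into the unified format."""
    try:
        adapter = ADAPTERS[source]
    except KeyError:
        raise ValueError(f"no adapter registered for {source!r}") from None
    return adapter(source, record)
```

Keeping one small adapter per harness means supporting a new tool only requires registering one more function; everything downstream sees the same record type.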

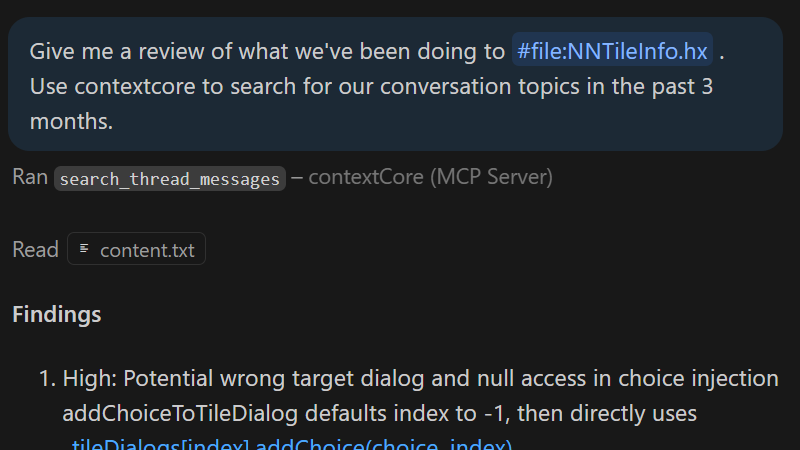

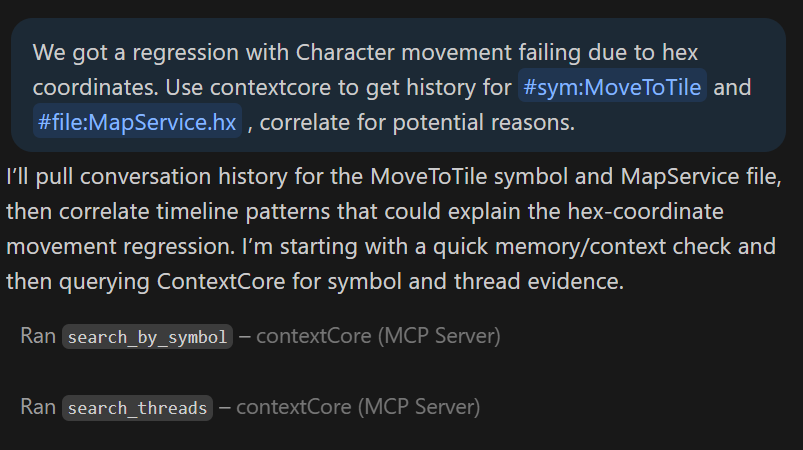

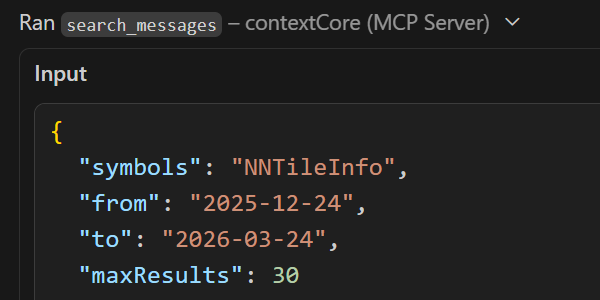

MCP Server

Transforms storage into a live memory layer. Allows any MCP-capable LLM to search your archive and build on prior reasoning automatically.
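
For orientation, MCP clients invoke server tools with JSON-RPC 2.0 `tools/call` requests. The sketch below builds such a request. The tool name `search_archive` and its `query` and `limit` arguments are hypothetical placeholders, not the tool names this server actually exposes.

```python
import json
from typing import Any


def build_tool_call(request_id: int, tool_name: str, arguments: dict[str, Any]) -> str:
    """Serialize an MCP `tools/call` request as a JSON-RPC 2.0 message."""
    return json.dumps({
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    })


# Hypothetical tool name and arguments, for illustration only.
request = build_tool_call(1, "search_archive", {"query": "retry backoff design", "limit": 5})
```

In practice an MCP client library sends this message for you; the point is only that the archive becomes reachable through an ordinary tool call.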

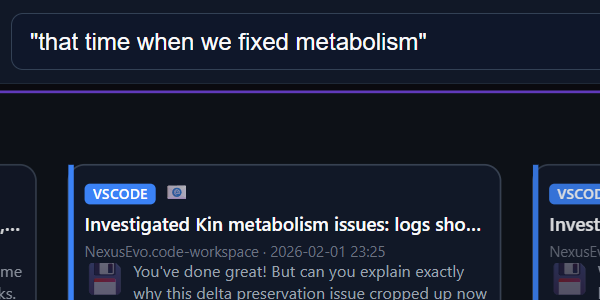

Hybrid Search

Fuzzy lexical search (Fuse.js) combined with semantic vector search (Qdrant). Find conversations by exact phrases or underlying meaning.
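
How the two result lists are merged is not specified here. One common option is reciprocal rank fusion (RRF), sketched below as an assumption rather than as the project's actual method. The document IDs and the constant `k = 60` are illustrative.

```python
def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Merge several ranked ID lists; items ranked high in any list score higher."""
    scores: dict[str, float] = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            # Each list contributes 1 / (k + rank) for every item it contains.
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    # Sort by descending score; break ties by ID so the output is deterministic.
    return sorted(scores, key=lambda doc_id: (-scores[doc_id], doc_id))


lexical_hits = ["a", "b", "c"]    # e.g. IDs returned by the fuzzy lexical search
semantic_hits = ["b", "d", "a"]   # e.g. IDs returned by the vector search
merged = reciprocal_rank_fusion([lexical_hits, semantic_hits])
# merged == ["b", "a", "d", "c"]
```

RRF works on ranks alone, so it avoids having to calibrate a fuzzy-match score against a vector-similarity score, which are on different scales.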

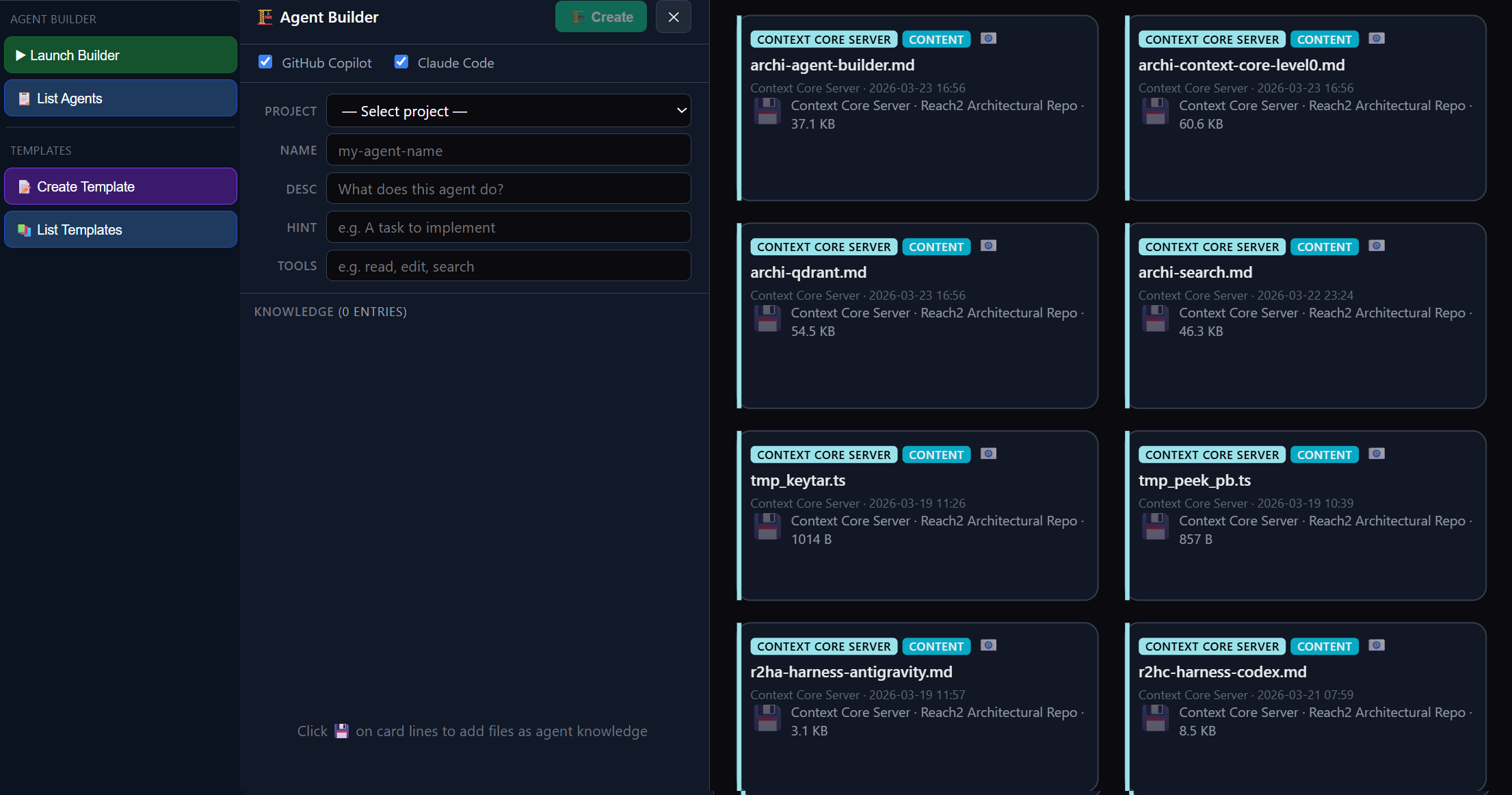

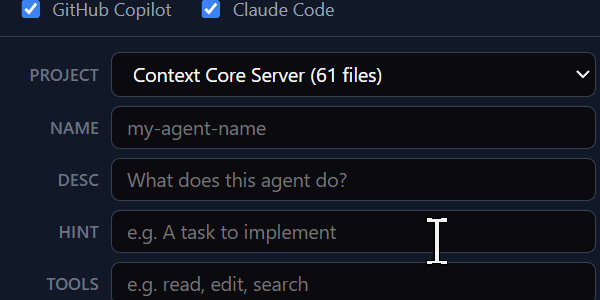

Agent Builder

Compose and edit reusable AI agents from indexed architecture docs, upgrade notes, and code files directly in the visualizer UI.

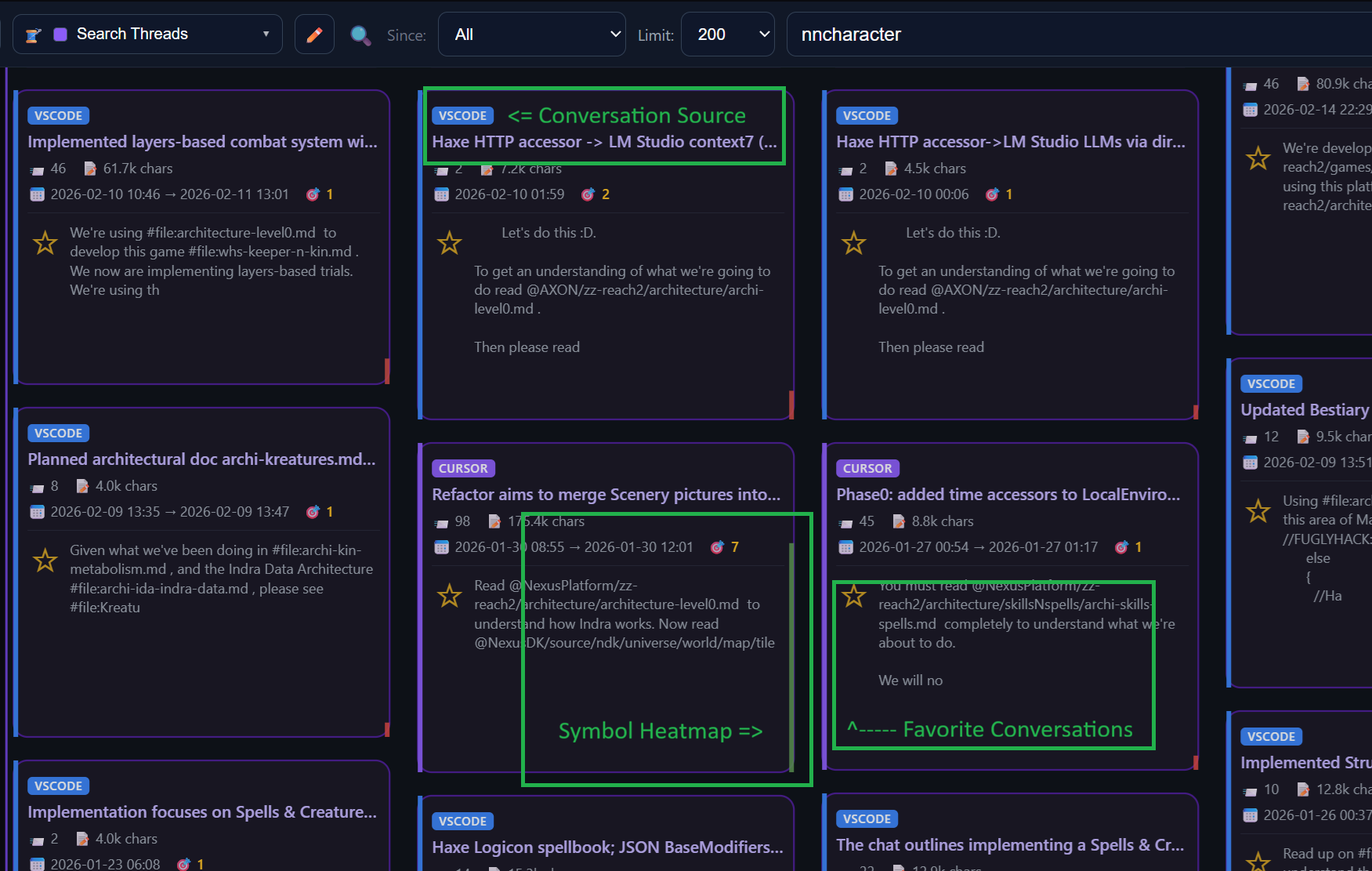

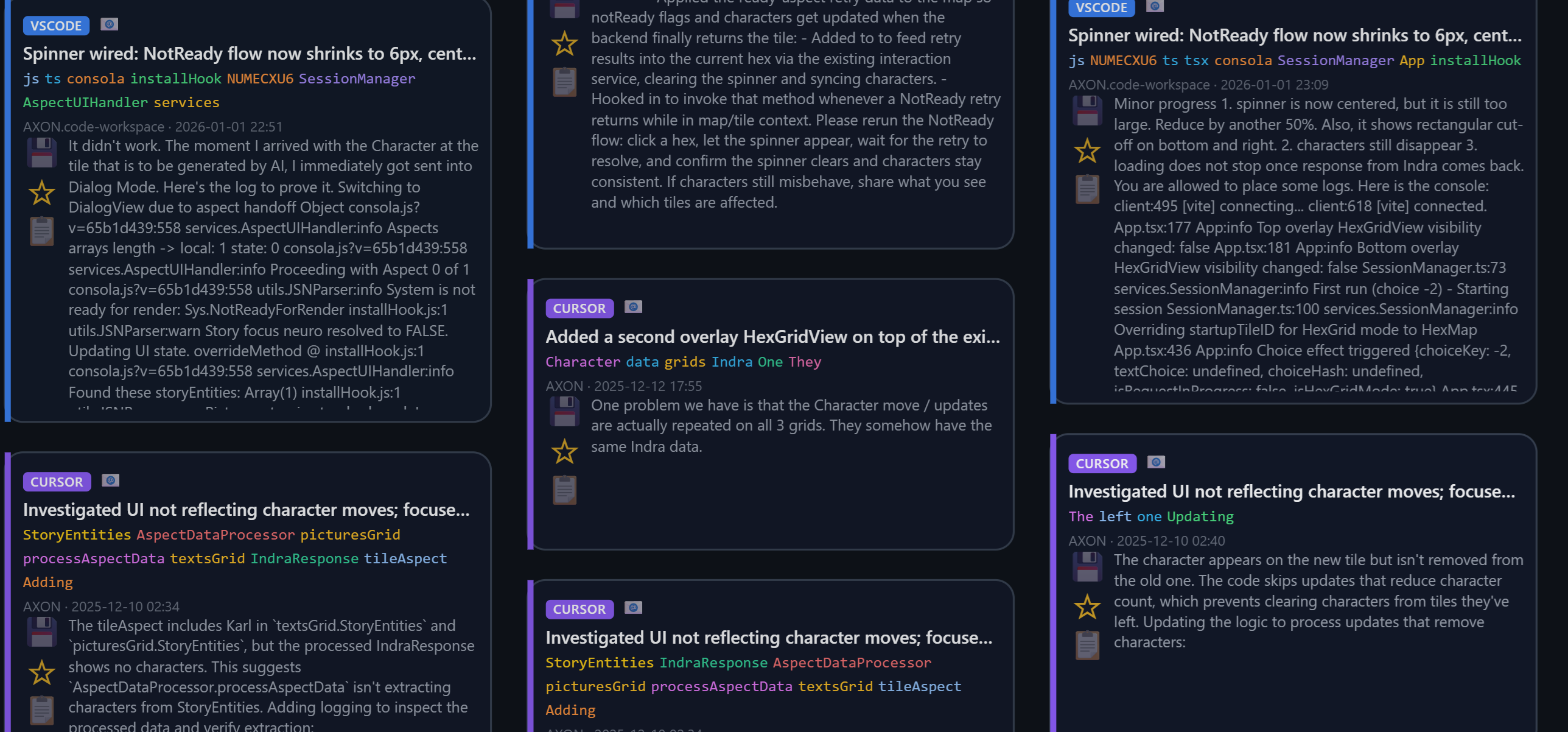

Interactive Visualization

A D3-powered zoomable card map. Explore your history spatially, save favorites, and spin up AI agents directly from your past chats and indexed project knowledge.

Skills & Memory Manager

Package repeatable engineering workflows into reusable skills and memory assets so future agents can apply your team's proven thinking patterns.